Torus Tuesday #14 — Sketches, Vignettes, and Seamless Transitions

We’re hard at work on exciting new content for The Torus Syndicate. As we do so, and as we’ve done since the project’s start, we need to be mindful of the unique challenges that come with making a virtual reality experience as seamless and immersive as possible. Take, for example, The Torus Syndicate’s introduction. It sets the game’s stage by jumping the player through a few different episodes from the life of the protagonist, Lucas Lawson. They visit his home and learn of his motivations for joining the police force. They experience going through the police academy and learn to play the game. And they graduate alongside the rest of their class, ready to investigate the dramatic underworld of the Torus Syndicate. We wanted the experience to be utterly seamless, to give the player the sense that they were being whisked from vignette to vignette without loading screen or hiccup.

If the game weren’t a VR game, we might be able to whisk the player away simply by building each vignette (or “sketch,” as we call them internally) into its own level. Normally, the game has to load each level just before the player enters it, but each sketch in the introduction isn’t so intense as to require significant loading time. Yes, we might skip a few frames and induce a momentary pause, but not enough to require a loading screen. In a virtual reality headset, though, that momentary pause takes on a whole new look and feel. Even a brief pause might cause the game to “tear” a bit, as the video output is no longer completely in sync with the motion of the player’s head. That’s not pleasant, so, if the SteamVR platform detects that the loss of up-to-date video is bad enough, it kicks the player out to a static VR environment that the computer can quickly render until the game catches up. The player won’t experience any nausea, but, for the duration, they also won’t experience the game.

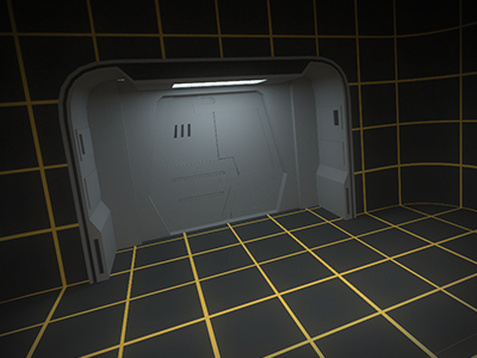

Our dev computers use a holodeck as the fallback, static VR environment, but, as cool as it is, we’d rather be playing the game.

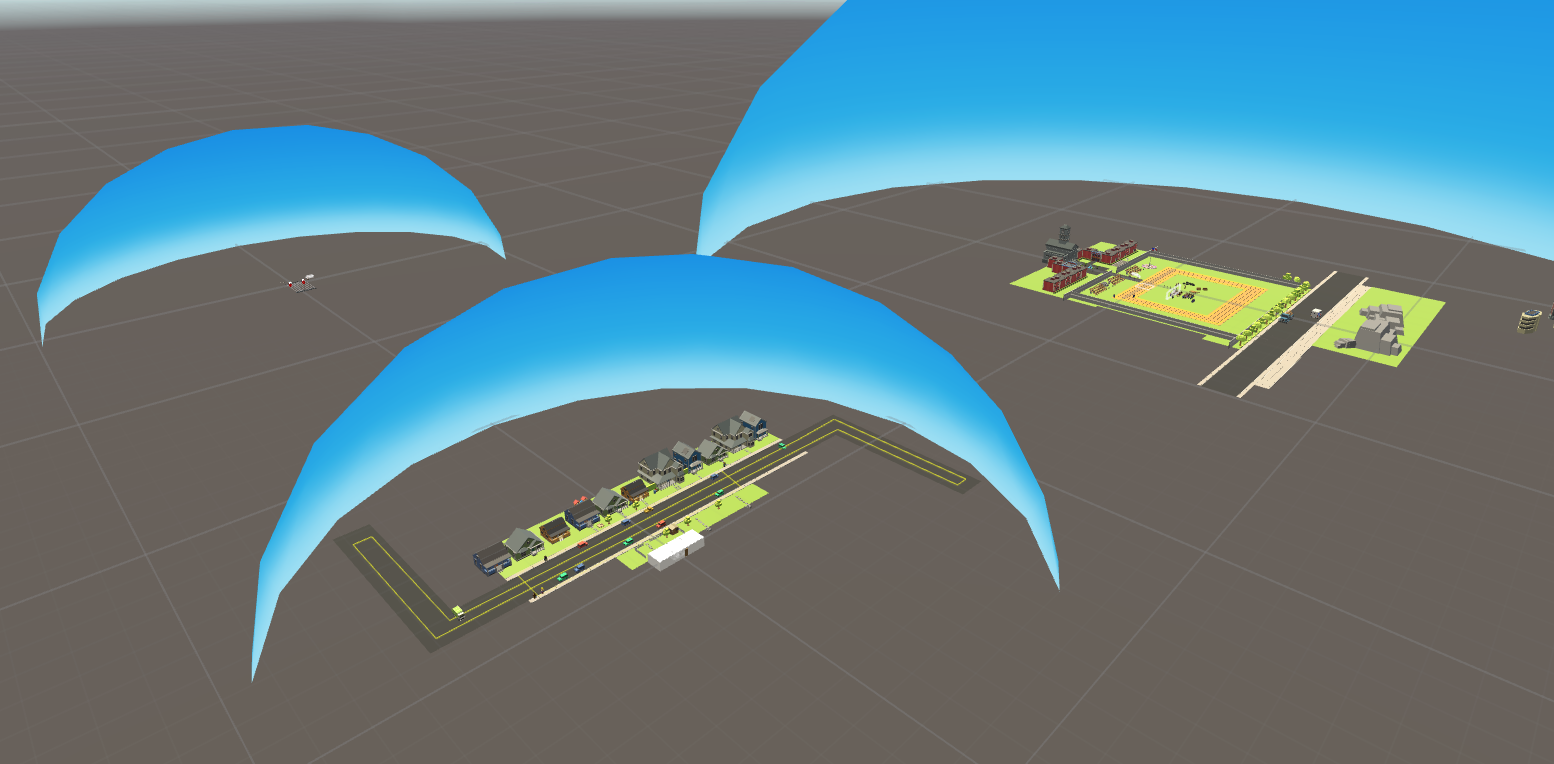

To keep players completely in the game without hiccup, we decided to keep all the sketches in the same level so they can all be loaded at once in the very beginning. If you could take a bird’s-eye view of the introduction, you’d see the sketches sitting near each other. They’re self-contained, with their own skyboxes to prevent players from seeing out into another sketch. Each sketch also has a separate transition space; this is where we put the title text that the player sees before they arrive at the next scene. It’s all wired up into the so-called SketchManager. When a player’s ready to move to the next big thing in Lucas’s life, the SketchManager jumps into action. It fades the screen to black and instantaneously moves the player into the transition space, then repeats the process after a short delay to move them into the next sketch. For the player, there are no loading screens, no tearing, and no pauses. It’s as seamless as teleporting from one place to the next and, if you’ve read one of my many posts on locomotion in The Torus Syndicate, then you know that makes for an immersive experience.
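The sequence described above can be sketched roughly as follows. This is only an illustration of the fade, move, wait, fade, move ordering, not the game’s actual SketchManager: the `Sketch` class, the `transition_delay` value, and the `log` list that stands in for real fade and teleport calls are all assumptions made for the example, as is the choice to use the transition space of the sketch being left.

```python
from dataclasses import dataclass, field


@dataclass
class Sketch:
    name: str
    title_text: str  # hypothetical: text shown in this sketch's transition space


@dataclass
class SketchManager:
    sketches: list
    transition_delay: float = 2.0  # assumed value, in seconds
    index: int = 0
    log: list = field(default_factory=list)  # stands in for engine calls

    def _jump(self, location):
        # The move happens while the screen is black, so the player never
        # sees the instantaneous change of position.
        self.log.append("fade to black")
        self.log.append(f"move player to {location}")
        self.log.append("fade in")

    def advance(self):
        """Move the player from the current sketch to the next one.

        Returns False when there is no next sketch.
        """
        if self.index + 1 >= len(self.sketches):
            return False
        current = self.sketches[self.index]
        upcoming = self.sketches[self.index + 1]
        self._jump(f"transition space of {current.name}: {current.title_text}")
        self.log.append(f"wait {self.transition_delay}s")
        self._jump(upcoming.name)
        self.index += 1
        return True
```

Because every sketch already lives in the loaded level, each step here is just a change of position; nothing in the sequence has to wait on loading.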

Lucas Lawson grew up in a strange town. The police academy, with its own sky, is on the right; his home street is in the middle.

Hurray! The Torus Syndicate is now on Steam.

Check out our store page and buy our game if you’ve been enjoying these posts!

Torus Tuesday #13 – The Proving Grounds

Moving past the hustle of our early access release, the team is now focused on creating new content.

Our biggest goal right now is to extend the replayability of The Torus Syndicate by taking the core mechanics of fighting enemies, taking cover, and moving between areas and applying different parameters to them to create new modes that deliver more of the core experience.

To that end, we’re building two new modes: Survival, where increasingly numerous and advanced enemies attack continuously until the player loses, and Time Trials, where players complete various challenges to get the best time or the highest score.

We’ll be showcasing more of Survival mode in the coming weeks, but right now, we have a sneak peek of the first Time Trial level:

In this challenge, players will shoot at pop-up targets and compete for the best time.

The new area will come with new achievements and Steam Leaderboard support.

Target positions are randomized each time, requiring quick reflexes over memorization.

This update should be available to play sometime this week. We’re excited to see how quickly our players can complete the course!

Torus Tuesday #12 — Adapting to the Player’s Setup and Goals

It’s been about a week since the launch of our first title, The Torus Syndicate. We’ve thoroughly appreciated watching so many people enjoy the game. One of the little details that we think contributed to the positive responses we’ve seen is how the game adapts to each player’s individual setup and adjusts for each task they’re trying to achieve. Curated Locomotion (the game’s teleportation system, which I’ve discussed in a series of posts) is a perfect example. It uses the play area’s shape and size to fine-tune each destination so that the player can best take advantage of the environment to accomplish their goal.

When players move into a firefight, Curated Locomotion ensures they’ll have ready access to cover.

When breaching a door, Curated Locomotion ensures that players have plenty of play space in the room ahead.

The fine-tuning mechanism is baked into the process through which we design each level. The process starts out pretty standard with laying out the map and populating it with scenery, much of which our players duck behind as cover while they fight through the world. We also configure the NODE AI Director, giving it an idea of what kind of challenge we want to throw at the player. Each playthrough might be different, but the broad strokes are locked down enough that we can tell which locations provide the most tactical advantages. In fact, that’s exactly what we do. As we install each locomotion site, we place a few different prototype play areas of varying dimensions. When a site becomes available for teleport, the game finds the prototype that best fits the actual play area and adjusts the site accordingly.
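As a rough illustration of the prototype matching described above, here is a minimal sketch. The post doesn’t say how “best fits” is scored, so this example assumes the best fit is the largest prototype that still fits inside the player’s actual play area, falling back to the smallest prototype when none fit. The `Prototype` class and its `anchor` field are hypothetical names invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prototype:
    width: float   # meters
    depth: float   # meters
    anchor: tuple  # hypothetical: where this prototype sits in the level


def best_fit(prototypes, play_width, play_depth):
    """Pick the prototype play area that best matches the real one.

    Assumed rule: prefer the largest prototype that fits entirely inside
    the actual play area; if none fit, fall back to the smallest one.
    """
    def area(p):
        return p.width * p.depth

    fitting = [p for p in prototypes
               if p.width <= play_width and p.depth <= play_depth]
    if fitting:
        return max(fitting, key=area)
    return min(prototypes, key=area)
```

The chosen prototype’s `anchor` would then tell the game where to place the teleport site for that player’s setup.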

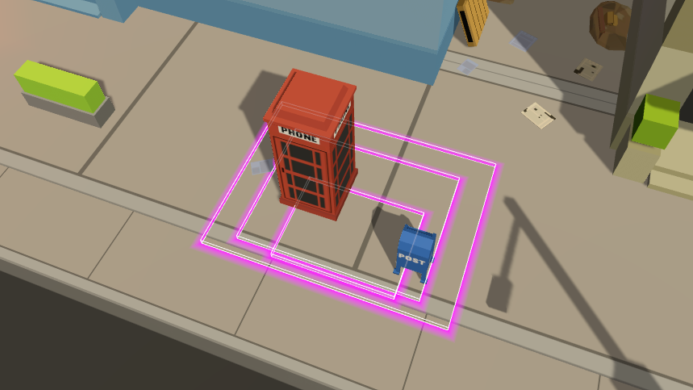

For this street fight, prototype play areas are arranged so that small ones don’t waste any space with solid objects, like the phone booth and mailbox, while still providing adequate access to cover.

These adjustments allow us to hand-craft each situation and accommodate a wide range of VR setups. Whether a player is peeking out from behind a car to take down bad guys or breaching a hotel room, we’ve made sure that everything they need is always readily available. And that definitely helps make for a seamless — and frustration-free — experience.

Torus Tuesday #11 — First Milestone

After 14 weeks of development, the time has finally come for our first milestone: the release of The Torus Syndicate on Steam!

We here at Codeate are incredibly proud of our first shipped title and hope that it’ll be the precursor to new and exciting things to come! It has been overwhelming to see people around the world trying the game for the first time and to watch their reactions.

The way forward

From this point, the next update will focus on rounding out the playability of the campaign thus far and on introducing a survival mode to provide replay value. This work will form a foundation on which we’ll continue working toward our more ambitious goals, such as co-op multiplayer and character customization. We expect this major update to drop in mid-to-late December.

One of the coolest things about working in the VR space is discovering and sharing new ways to interact with this new medium. We recently posted an article discussing a gaze method we’re calling cone-casting, which makes the gaze system work consistently regardless of how far the object is from the eye. We will definitely continue along this path of open sharing and inclusion that VR certainly needs right now.

That’s it for now. If you’ve purchased the game, fantastic, and thank you for the support; if you have not, we hope you’ll give it a try at some point! In the meantime, check out the following reviews of our game.

Check out @CodeateLLC #TheTorusSyndicate review out now https://t.co/k79KkS44uK

— CryMor Gaming (@CrymorGaming) November 22, 2016

Shout out to @TerribleTall for the wildly entertaining review of #theTorusSyndicate #VR!https://t.co/GKs5zWBwHa

— CodeateLLC (@CodeateLLC) November 23, 2016

Torus Tuesday #10 — Cone-casting: A more human gaze system

The Torus Syndicate launch is just around the corner! One thing players will notice when they get their hands on a copy is the importance of gaze in the game. A person’s eyes provide a window into their focus and intent; they generally look towards what they want, where they want to go, and with whom they want to speak. Gaze input takes advantage of how people naturally behave and, when done correctly, makes for a truly intuitive and immersive input mechanism.

Doing it correctly, as it often turns out, is easier said than done, and that starts with figuring out exactly what the player is gazing at. The simplest solution, and the one we’ve almost exclusively seen in the wild, is to cast a ray forward from the player’s head; whatever it collides with is what the player is looking at. Early versions of The Torus Syndicate used this logic. Early play testers of The Torus Syndicate did not like it.

Play testers had trouble actually triggering the gaze mechanism. That invisible ray would often just miss the side of what they were trying to select, with small or far-away objects exacerbating the situation. Part of the problem lies in the fact that today’s commercially available VR headsets don’t do eye tracking. They know the position and orientation of the player’s head with sub-millimeter and sub-degree accuracy, but they know nothing about what the player’s eyes are actually doing. People look with their eyes more so than just their heads, so the VR headsets can only give us a rough approximation of what the players are actually trying to look at. We could instruct players to keep their eyes dead center in their sockets and move only their heads when gazing, but we wanted people to feel like they had actually become our game’s human protagonist. Instead, we were making them feel more like owls.

Players shouldn’t have to act like this owl to get the best experience.

Our first attempt at restoring our players’ humanity was to give them bigger targets to hit. We divorced an object’s gaze size from its real size, making it appear larger solely for the purposes of the gaze mechanism. However, we now had the opposite problem: our gaze system, which once thought that players were gazing at nothing special, now thought that the players were gazing at many things special. Sometimes, players would try to talk to someone, but the gaze system would misread them and accidentally teleport them across the map. We quickly realized that we simply weren’t taking into consideration how perspective actually works. As an object gets farther from the player, it appears smaller and becomes harder to hit. So long as our compensation mechanism didn’t vary depending on an object’s distance, the gaze system would require an inconsistent (and sometimes impossible) level of precision that would be frustrating to the player.

One way to make for a consistent and frustration-free experience is to dynamically change the gaze size of all gazeable objects depending on where the player is. That is, if an object is far away, make it appear larger to the gaze system so that it’s as easy to pick out as a close object. This is an awkward solution, the most problematic aspect of which is that it simply won’t work if there are multiple players. Fortunately, we found another way by imagining the player’s gaze as a cone extending out from their forehead, with everything inside the cone considered to be in the player’s gaze. Up close, the cone only encompasses a small area. Far away, it grows to encompass a larger area. This is really just taking that awkward change-the-size-based-on-the-player’s-position solution we just talked about and flipping it inside out. However, instead of enlarging far-away objects to make them easier to pick out with a thin line, we keep the objects the same size and pick them out with a cone that gets thicker with distance to compensate for the effects of perspective. The results are the same: an even sensitivity independent of where an object is in relation to the player. With a cone, though, we can keep multiplayer on the table.
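To make the geometry concrete, here is a minimal sketch of a point-in-cone test. It is an analytic check written for illustration only, not the collider-based approach the game uses; the function names and the 5-degree half-angle are assumptions. A target counts as gazed at when the angle between the gaze direction and the direction to the target is within the cone’s half-angle, which is why the tolerance in meters grows with distance while the angular tolerance stays constant.

```python
import math


def cone_radius_at(distance, half_angle_deg=5.0):
    """Radius of the gaze cone at a given distance from the head."""
    return distance * math.tan(math.radians(half_angle_deg))


def in_gaze_cone(head_pos, gaze_dir, target_pos, half_angle_deg=5.0):
    """Return True if target_pos lies inside the gaze cone.

    head_pos and target_pos are (x, y, z) points; gaze_dir is a
    direction vector that does not need to be normalized.
    """
    to_target = [t - h for t, h in zip(target_pos, head_pos)]
    dist = math.sqrt(sum(c * c for c in to_target))
    gaze_len = math.sqrt(sum(c * c for c in gaze_dir))
    if dist == 0 or gaze_len == 0:
        return False
    # Cosine of the angle between the gaze and the direction to the target.
    cos_angle = sum(a * b for a, b in zip(to_target, gaze_dir)) / (dist * gaze_len)
    return cos_angle >= math.cos(math.radians(half_angle_deg))
```

With these defaults, a target half a meter off-axis is inside the cone at ten meters but well outside it at one meter, while a target five centimeters off-axis at one meter is inside: the same angular offset is accepted at any distance.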

The green figures animate as the player’s gaze passes over them.

Unfortunately, cone casting isn’t as commonly supported by commercially-available 3D game engines as ray and sphere casting are. That’s not surprising. The calculations involved in checking if a collision occurs between an object and a ray or sphere are simpler (and computationally less expensive) than between the same object and a cone. The workaround, though, was surprisingly simple, if only in retrospect. We attached a virtual, invisible cone to the player’s forehead. The cone is set to only interact with the special gaze colliders attached to objects ready for player manipulation, which helps to keep resource consumption and spurious physics events to a minimum. As the player looks around, the volume sweeps across space. When an object collides with it, we can be pretty confident that it’s entered the player’s gaze.

Knowing what the player is gazing at has proven indispensable to making our players’ experience as immersive as possible. Each element in the game can sense when they’re looking at it and act accordingly. It’s almost like the world bends to our players’ mere thoughts, and that’s absolutely the kind of world we’ve set out to build.

Torus Tuesday #9 — A lesson in tossing

Early in the design of The Torus Syndicate, we knew that we wanted some sort of object that players could throw. This object would provide alternative combat options that required some skill to use but resulted in a great payoff if placed and timed right. The goal was to create meaningful choices for the player during play: whether to use the item now to get past a particularly tough situation, at the risk of not having it when a more challenging scenario occurs, or to save it.

Our initial approach was to simply let players pick up the object and throw it. It was the intuitive solution: since players now have hands, why not let them actually toss the object? Surely it would increase immersion!

As it turns out, the mechanic was a double-edged sword. While it was certainly awesome to chuck a grenade across the world to the feet of some poor NPC, it was also incredibly hard to do so with any kind of accuracy. After a couple of throws, we also found it quite tiring if the grenade needed to go somewhere far away. On top of that, it was really inconvenient, requiring the player to perform multiple steps in order to actually use the grenades.

Stepping back from the ledge of bad game mechanics disguised as new immersive experiences, we had to ask ourselves: Is it too much? Are we getting what we want?

Our initial goal was for a successful player to be able to use this tool skillfully. Having the player toss the grenade introduces a mechanic so variable that a skilled player might still fail from time to time, and a novice player has practically no hope of the item doing what they wanted it to do. This isn’t fun; it’s the definition of frustrating.

The example above goes back to one of the earlier topics about mastering the craft: being a master requires knowing not just what to put in but also what to leave out. A director doesn’t use every type of cut or transition they know simply because it’s a film. Instead, a good director chooses the cuts and transitions that best serve the goal of the movie, which is to deliver a story.

In a similar vein, we realized that we needed a different way to deliver the gameplay we wanted. We eventually repurposed a common VR teleportation approach. Using the user interface of a parabolic arc teleporter, we could show the player the trajectory and landing location of their grenade with a single button press and provide real-time feedback on how changes to their controller orientation would affect the trajectory. This fit the design goal of timely and effective use of the tool, was accessible to all players (independent of their strength), and saved us a significant amount of time compared with implementing another system. It’s certainly a more game-y design, but we think it’s an appropriate trade-off when seen from the perspective of usability.
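For readers curious about what a parabolic arc preview involves, here is a minimal sketch under simple assumptions: a fixed launch speed, constant gravity, no air resistance, and a flat ground plane. The function name, parameters, and default values are invented for the example and are not taken from the game. Given the controller’s position and pointing direction, it samples points along the arc and returns the landing spot.

```python
import math


def grenade_arc(origin, direction, speed=8.0, gravity=9.81,
                samples=20, ground_y=0.0):
    """Sample a ballistic arc from origin along direction.

    origin is an (x, y, z) point with y up; direction is the controller's
    pointing vector (not necessarily normalized). Returns (points, landing),
    where landing is the point at which the arc reaches ground_y.
    """
    length = math.sqrt(sum(c * c for c in direction))
    if length == 0:
        raise ValueError("direction must be non-zero")
    ox, oy, oz = origin
    if oy < ground_y:
        raise ValueError("origin must not be below the ground plane")
    vx, vy, vz = (speed * c / length for c in direction)
    # Solve oy + vy*t - 0.5*g*t^2 = ground_y for the positive root.
    t_land = (vy + math.sqrt(vy * vy + 2 * gravity * (oy - ground_y))) / gravity
    points = []
    for i in range(samples + 1):
        t = t_land * i / samples
        points.append((ox + vx * t,
                       oy + vy * t - 0.5 * gravity * t * t,
                       oz + vz * t))
    return points, points[-1]
```

Recomputing the arc every frame from the current controller pose is what gives the player real-time feedback: tilting the controller changes `direction`, and the sampled points and landing spot move with it.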

Torus Tuesday #8 — Gameplay Teaser Trailer #1

Happy Torus Tuesday!

We here at Codeate are celebrating our two-month anniversary of the Torus Tuesday Dev Blog. It has been a joy to write about our journey developing for virtual reality as well as share some of our thoughts regarding the interesting interaction problems that generating VR content entails.

We’ve spent the past few weeks talking about the process of developing The Torus Syndicate and the new ideas we intend to introduce through it. For all those pages of text and brief animations, however, we’ve only given you a few tantalizing glimpses of the actual game. Today will be different, as we’re now presenting our first ever teaser for the game.

The Torus Syndicate is an intense, non-stop arcade rail-shooter built from the ground up for room-scale virtual reality. It follows Lucas Lawson, a rookie cop swept into the dramatic underworld of the Torus Syndicate. Players put their shooting, dodging, and tactical skills to the test as they battle across an urban landscape in a quest for justice.

We’re so excited to be sharing our game with the world in just a few weeks on Steam as an Early Access title. Stay tuned!

Torus Tuesday #7 — The Toys of Torus Syndicate

In a previous post, I spoke about the concept of world building, and how it is a crucial component to making games feel immersive, especially in VR. Today I want to build on the idea of world building by introducing the need for VR games to have toys.

If you look around, toys exist everywhere in our world. Chances are, there’s a toy sitting on your desk at work or somewhere in your living room. It might be prudent, however, to define what a toy is before continuing.

A toy is something you play with.

It’s as simple as that. A toy is an object that can be engaged with for the purpose of deriving some pleasure from it. A simple coin can be played with by spinning it, or a pen by twirling it. It is surprising how often humans engage in play without even fully realizing it. Perhaps it is at the core of human nature to play with toys. Just as it is human nature to wonder what a button or a knob does when we encounter something unknown, we are constantly on the lookout for something that engages our need for play.

I believe the same holds true for virtual reality experiences. A convincing world should have toys: things that can be picked up and manipulated and that give some sort of feedback. During testing, players are often disappointed when they learn that they can pick up some objects, but not all objects. In a way, this is restriction through empowerment: once the player is aware of their ability to pick up objects, they are more disappointed at its limited use than if they were not able to pick up any objects at all!

We took the idea seriously and started adding toys to the world. Some are obvious, such as this beer bottle that spills liquid when turned over (and destroys your computer if you spill said liquid on it):

Others make aspects of the world react when the player shoots at them during the game, such as these explosive canisters:

It is important here to separate the concepts of toy and game. Although both are meant to be played with and are hopefully fun, a game has the distinction of having a goal and can be won or lost. A tennis ball can be a toy because you can throw it around, but a tennis game requires rules, points, another player, and, most importantly, an outcome where some players win and some players lose.

It may become self-evident, then, that although toys are an important part of making a game immersive, they must not be so distracting as to muddy the goal of the game itself. Wherever possible, a toy should help tell a story and contribute to the overall quality of the game.

We are getting closer and closer to the release of The Torus Syndicate, and we’ve got something big to show everyone!

Torus Tuesday #6 — Curated Locomotion Today

I’ve spent the past few weeks talking about Curated Locomotion, our novel method for moving through a large virtual world. We’ve discussed its inspiration and its early days; now, it’s time to talk about what it actually is (or, rather, has become).

When we last left off, Curated Locomotion was stuck on the concept of the teleportation pad. At appropriate times, the player would see curated destinations, each one represented by a disc on the ground: the teleportation pad. In order to best use the player’s physical play area, we would also project another pad near them in the physical location we determined to be most optimal. After selecting the destination pad, the user would stand on the projected pad and complete the teleport. Unfortunately, the pads — especially the projected pad — confused users. We ultimately determined they had to go.

The pads served an important purpose that we couldn’t give up, though: they anchored the player’s position, both in the virtual world and in their play space. The key to removing them was realizing that, at a fundamental level, our real concern was all about that play space. What we were trying to achieve was to place the user’s play space optimally in the virtual world. We didn’t need the pads so long as we could tie the play area to specific virtual locations.

Our solution is similar to the way the pads worked, but sort of in reverse. Instead of projecting the destination pad into the player’s original space, we project the player’s play area into the destination. Each destination is represented by a translucent box showing what the bounds of the play space would be if the player teleported to it. In a way, these teleportation boxes are better than the pads in that players can easily see what they’ll be able to interact with should they choose a particular site.

In another way, though, these plain teleportation boxes miss something that the pads had: they don’t show precisely where the user will end up, which depends on where the player is physically standing. In the best case scenario, that’s disorienting to the user. In the worst case scenario, the user could accidentally teleport into a solid object. Consequently, the Curated Locomotion system projects an avatar into the box that mimics the player’s relative position.

To help guide the user, the avatar changes from its normal green color to red when it is obstructed and teleportation is blocked. Furthermore, the avatar shines through all objects so that, in the event that it is obscured, the player can tell which way to move to unblock it. The user is in control here; they can teleport from any open space in their play area to any unobstructed spot in the curated destinations. Ultimately, we were glad to give some of that control back to the player. While it’s true that losing the pads meant we lost fine-tuned command over the user’s starting position in each teleportation destination, we still are able to keep the user ideally situated in their physical play space in a fairly seamless fashion.
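The avatar projection can be pictured with a small sketch like the one below. It works on a 2D floor plan and makes several simplifying assumptions that are not from the game itself: the destination box has the same orientation as the physical play area, obstacles are axis-aligned rectangles, and the `Rect` class and `project_avatar` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Axis-aligned footprint of a solid object on the floor plane."""
    min_x: float
    min_z: float
    max_x: float
    max_z: float

    def contains(self, x, z):
        return self.min_x <= x <= self.max_x and self.min_z <= z <= self.max_z


def project_avatar(player_offset, box_center, obstacles):
    """Place the avatar in the destination box and pick its color.

    player_offset is the player's (x, z) offset from the center of their
    physical play area; box_center is the (x, z) center of the destination
    box in the virtual world. Returns the avatar position and "red" when
    the spot is obstructed (teleport blocked) or "green" otherwise.
    """
    x = box_center[0] + player_offset[0]
    z = box_center[1] + player_offset[1]
    blocked = any(o.contains(x, z) for o in obstacles)
    return (x, z), ("red" if blocked else "green")
```

Because the avatar’s position is derived from where the player is physically standing, stepping to another part of the play area moves the avatar inside the box, which is how a player can walk an obstructed avatar back to green.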

We can do better, though, than just fairly seamless. Instantaneous teleportation is definitionally not smooth; there’s a harsh seam when the player warps in the blink of an eye. We wanted the players to fully experience the world, not feel like they were just jumping through it. We were concerned that Curated Locomotion would only heighten that sensation because the destination sites are often far apart. Fortunately, we noticed that the community had solved this problem for us with the “blur sprint.” When players select their target site, the game doesn’t move them there instantly. Instead, the player rapidly moves through the intervening space. We have to severely blur the display to prevent motion sickness, yes, but the players see enough to get a sense that they are traveling through the world and not skipping through it.
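A blur sprint of this kind might be sketched as a short, fixed-duration interpolation between the two sites with the blur switched on while the player is in motion. The duration, frame rate, and blur values below are placeholder assumptions, and `blur_sprint` is a hypothetical name; a real implementation would drive the engine’s camera and a post-processing effect instead of yielding tuples.

```python
def blur_sprint(start, end, duration=0.5, fps=90, max_blur=1.0):
    """Yield (position, blur_strength) for each frame of the sprint.

    The player moves in a straight line from start to end. Blur is zero
    on the first and last frames and max_blur while the player is moving.
    """
    frames = max(1, round(duration * fps))
    for i in range(frames + 1):
        t = i / frames
        position = tuple(s + (e - s) * t for s, e in zip(start, end))
        blur = 0.0 if i in (0, frames) else max_blur
        yield position, blur
```

Keeping the sprint short and the blur strong is the trade-off the paragraph above describes: enough motion to convey travel, not enough visual detail to upset the player.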

That immersion, from blur sprinting and especially from Curated Locomotion, is precisely what started us on this journey in the first place. The end result is a snappy, riveting game. We’re hard at work developing The Torus Syndicate, and we couldn’t be more excited to unveil it in the coming weeks. Stay tuned; it will be worth it!

Torus Tuesday #5 — Handling Handedness in VR

With the recent announcement of the Oculus Touch controller and the opening of pre-orders, both top-end VR systems (Oculus Rift and HTC Vive) now have a way to track users’ hand movements. Having worked with the HTC Vive motion controllers, we find it obvious why both companies have decided to introduce motion controllers instead of the traditional gamepad: the experience is simply more immersive.

At its core, the addition of hand-tracking controllers brings more of the user’s body into the experience. Whereas a headset-only VR system allows for verbs like Look, Rotate, and Lean, controllers add new verbs such as Point, Grab, and Throw, along with a wide range of ways to manipulate the world around the player that were previously not possible, as in Google’s Tilt Brush:

With the introduction of hand controllers, new questions and challenges have arisen from their use, in particular the ergonomics of allowing both left-handed and right-handed users to comfortably use your product. This is an important issue: we’ve found that although many players are right-handed, there are players who would prefer to use their left hand as their dominant hand. Accommodating these users should be part of a VR designer’s responsibility.

To date, there are a few different approaches to this issue:

- Allow both hands to do all actions: If the design allows for it, this is the simplest method; players will naturally pick what they’re comfortable with. An example of this is the dual guns/shield in Space Pirate Trainer, which lets the user pick any configuration of gadgets for either hand. For a limited set of potential player actions, this is the best approach.

- Inventory-based actions: Role-playing games like Vanishing Realms use a system where a hand is a generic object that can pick up various items, which give it additional functionality. Players can choose to pick up an item with either hand to suit their needs. This allows for more extensible functionality, at the cost of some complexity to the player. It also presents challenges for UI design, as the equipment in Vanishing Realms is stashed in places that assume a right-handed player (swords are stashed on the right side of the body, etc.).

- Physically switch controllers: This is relatively simple but sub-optimal. Physically switching controllers is an immersion-breaking scenario that reminds the player of their connection to the real world. This should be avoided.

For The Torus Syndicate, we’ve decided to create a settings menu option that switches the dominant hand. This approach works well for situations where there is a clear dominant and auxiliary hand. This also has the benefit of being easy to implement, as SteamVR handles arbitrary assignments of devices on the fly.
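The idea behind such a setting can be sketched as a single stored preference that everything else reads through. The sketch below is purely illustrative and does not touch any SteamVR API; the `HandSettings` class and the role names `"dominant"` and `"auxiliary"` are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class HandSettings:
    dominant: str = "right"  # "left" or "right"

    def swap(self):
        """Toggle the dominant hand, as a settings menu option would."""
        self.dominant = "left" if self.dominant == "right" else "right"

    def controller_for(self, role):
        """Return which physical hand plays the given role.

        role is "dominant" (e.g. the weapon hand) or "auxiliary".
        """
        if role == "dominant":
            return self.dominant
        if role == "auxiliary":
            return "left" if self.dominant == "right" else "right"
        raise ValueError(f"unknown role: {role}")
```

If gameplay code always asks for a role instead of a specific hand, switching handedness becomes a one-line change of state and no interaction logic needs to know which physical controller it is attached to.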

Development is going at a rapid pace here at Codeate, and we’re excited to be showing off our levels in just a few weeks!